It’s holiday break! That means I have more discretionary time for topics such as… Juniper interface-ranges! Yay! Right?

Ok, not the most exciting topic in the world, but hear me out — I think there’s a pretty good use case for using Juniper interfaces-ranges, which is a Junos feature that I think really stands out from other NOS’ that I’ve had exposure to. What is the use case? Simple: campus switch port configurations.

What is a Juniper interface range?

Let’s start by explaining what it is and is not, because if you’re coming from other vendors, the concept may be foreign and/or you may conflate it with features from others.

An interface-range is a logical construct in a Juniper configuration that aggregates multiple port configurations and places them into one configuration. Here is an example:

interfaces {

interface-range workstations201 {

member ge-0/0/0;

member ge-0/0/1;

unit 0 {

family ethernet-switching {

interface-mode access;

vlan {

members 201;

}

}

}

}

interface ge-0/0/0 {

description "Workstation 1";

}

interface ge-0/0/1 {

description "Workstation 2";

}

}

Here I have two interfaces that are members of the interface-range ‘workstations201’; they both are access ports and both belong in VLAN 201. Here’s the configuration if I were to separate out the interfaces into separate configurations:

interfaces {

interface ge-0/0/0

description "Workstation 1";

unit 0 {

family ethernet-switching {

interface-mode access;

vlan {

members 201;

}

}

}

}

interface ge-0/0/1

description "Workstation 2";

unit 0 {

family ethernet-switching {

interface-mode access;

vlan {

members 201;

}

}

}

}

}

As you can see above, interface configurations are often repeated multiple times if you configure them one-by-one, but interface-ranges simplify port configurations and reduce the number of lines of these repeated configurations (in a 48-port switch with each port the same, theoretically using an interface-range could remove up-to 384 lines). If you need to change a port to a different VLAN or different configuration, simply delete the interface’s membership from one interface-range and place it in another.

What interface-ranges are not: CLI commands for making mass interface changes. For that, Juniper has the wildcard range command. For more info on that here.

Configurations Beyond the Individual Interface

So reducing the number of lines of configuration is great, but so what? If you come from the world of other vendors, you’re already used to the idea of keeping port configurations and their protocols all in the interface’s configuration, so configuring edge ports this way isn’t going to offer you much.

In the Junos world though, interface’s don’t hold all the configurations for the ports. For example, if you want to configure a voice/auxillary VLAN, that configuration on Junos (well, in post ELS change*) is in the switch-options stanza of the configuration. Sure you could configure switch-options like this and have all access ports configured for VoIP like this:

switch-options {

voip {

interface access-ports {

vlan PHONES;

forwarding-class expedited-forwarding;

}

}

}

But what if you have devices that will utilize the voice/auxiliary VLAN, but you want them specifically in a separate VLAN than your voice/auxiliary VLAN? You would have to configure each port specifically for that protocol like this:

switch-options {

voip {

interface ge-0/0/0 {

vlan PHONES;

forwarding-class expedited-forwarding;

}

interface ge-0/0/1 {

vlan PHONES;

forwarding-class expedited-forwarding;

}

}

}

If you needed to make a change for an interface, and you’re doing this via CLI, managing these changes could get daunting.

Let take another place where configurations aren’t located in the same location: spanning-tree. It’s not enough in Junos to just turn on spanning-tree on all ports; you need to specify which ports are edge (to block BPDUs) and which ones are a downstream switch (ignore BPDUs). Often I have seen Junos configurations for campus gear look like this:

protocols {

rstp {

interface all;

bpdu-block-on-edge;

}

}

But from my experience, all that does is turn on RSTP, and ‘bpdu-block-on-edge’ does nothing because no ports are designated as ‘edge’. In order to accomplish the above, you need to designate edge ports and ports for downstream switches (no-root-port):

protocols {

rstp {

bpdu-block-on-edge;

interface ge-0/0/0 {

edge;

}

interface ge-0/0/1 {

no-root-port;

}

}

}

Great, so now you’re having to manage port configurations in three stanzas, and you’re adding a hundreds of lines of configurations to each switch configuration. WTH, Juniper?

If only there was a way to simplify this…

Where Interface-Range Shines

Interface-range does simplify all of this and reduce the lines configuration! Instead of blabbing on and on about this, let me just show you exactly how this is simplified:

protocols {

rstp {

interface all;

interface workstations201 {

edge;

}

bpdu-block-on-edge;

}

}

switch-options {

voip {

interface workstations201 {

vlan PHONES;

forwarding-class expedited-forwarding;

}

}

}

Using interface-range, we can treat the interface-range as an interface object and apply configurations to it just like you would individual interfaces. For ports needing voice/aux VLANs, we can configure only the ports needing it; for spanning-tree, we can designate edge and no-root-port more simply.

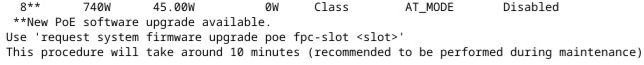

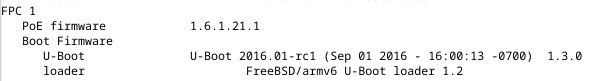

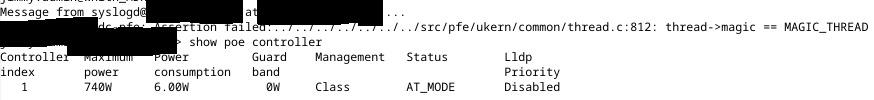

Another great example: what if you needed to quickly power cycle all IP speakers? Sure, you could set the following in CLI:

wildcard range set poe interface ge-0/0/[0-2,5,10,18] disable commit

But what if you’re working in a virtual-chassis, and your wildcard range command won’t work to get all the ports in one line? Using interface-range, you can get them all in one line like this:

set poe interface ip_speakers disable commit

Then BAM! You’ve turned off PoE and you can just rollback the config (or delete it, whatever floats your boat), and the IP speakers are rebooted.

Interface-range, for me, just seems like a more efficient way of managing ports configurations (even if you automate). Here’s an example that brings it all together:

interfaces {

interface-range workstations201 {

member ge-0/0/0;

member ge-0/0/47;

unit 0 {

family ethernet-switching {

interface-mode access;

vlan {

members 201;

}

}

}

}

interface-range ip_speakers {

member ge-0/0/46;

unit 0 {

family ethernet-switching {

interface-mode access;

vlan {

members 401;

}

}

}

}

interface-range wireless {

member ge-0/0/1;

native-vlan-id 100;

unit 0 {

family ethernet-switching {

interface-mode trunk;

vlan {

members 201 301;

}

}

}

}

interface-range downstream_switches {

member ge-0/0/2;

native-vlan-id 100;

unit 0 {

family ethernet-switching {

interface-mode trunk;

vlan {

members all;

}

}

}

}

ge-0/0/0 {

description workstations201;

}

ge-0/0/1 {

description wireless;

}

ge-0/0/2 {

description switch_02;

}

ge-0/0/46 {

description ip_speakers;

}

ge-0/0/47 {

description workstations201;

}

}

protocols {

rstp {

interface all;

interface workstations201 {

edge;

}

interface wireless {

edge;

}

interface downstream_switches {

no-root-port;

}

interface ip_speakers {

edge;

}

bpdu-block-on-edge;

}

}

switch-options {

voip {

interface workstations201 {

vlan PHONES;

forwarding-class expedited-forwarding;

}

}

}

Why Not Use Junos Groups Instead of Interface-Range?

From my experience, and from I can tell from working on campus switch configurations, groups don’t seem to offer the simplicity for port configurations like interface-range does, and, from all that I can gather, I cannot configure VLANs for interfaces and interface modes with the group feature like I can with interface-range. When dealing with hundreds of campus switches and VC stacks, with all the port additions and changes, it just seems like interface-range is best approach for campus switching.

That said, once you get into the distribution and core layers, I’m less certain about the applicability for interface-range, but groups seem to be better suited here.

Edit (20191124.1945) – For a perspective from some people with more experience with apply-groups than myself, check out this post I made on the Juniper subreddit. There’s even examples for how to approach this from an apply-groups model. That said, I still think interface-range is a better approach for campus switching simply for the operational benefits you get with using them.

Caveats/Miscellaneous

There are a few caveats to keep in mind with interface-range, so I’ll note them quickly:

You can’t stage interface-ranges. An interface-range needs a member in order for it exist, so you can’t preconfigure them (although perhaps you could comment them out), and if your interface-range loses all members, you’ll need to either delete them or comment them out.Edit (20191124.1945) – This isn’t entirely true; one comment on Reddit suggests creating fake interfaces to stage them, but I’m not entirely sure if there aren’t any effects with that.- There are two ways to add members: member or member-range. I prefer to avoid member-range because if an interface changes in the range, you have to break up the range (IIRC). That said, if ports are static, you could use member-range or member (wildcard) statements to configure members.

- I have had the CLI bark, but still commit, when interfaces were blank/missing, but had configurations in the interface-range area. I should verify this, but as of the time of writing this, this is what I recall.

- Network admins/engineers who come from other vendors can get confused with interface-ranges, especially if you mix interface-ranges and standalone interface configurations. They make think something is missing and so forth; just make sure to brief them on it.

Final Thoughts

Junos has a lot of ways of accomplishing what you want, so what I’ve demonstrated here is just one way of accomplishing interface configurations; but from my experience, this seems like the more efficient way for campus switches.

Please let me know if there any mistakes in here or if you have a different way of using interface-ranges. Would love to be corrected or see other uses!

Thanks for reading.

* Enhanced Layer 2 Switching. Junos changed how configurations were done a few years back. Follow the link for more info.